MONTRÉAL

June 18-22, 2018, Palais des congrès

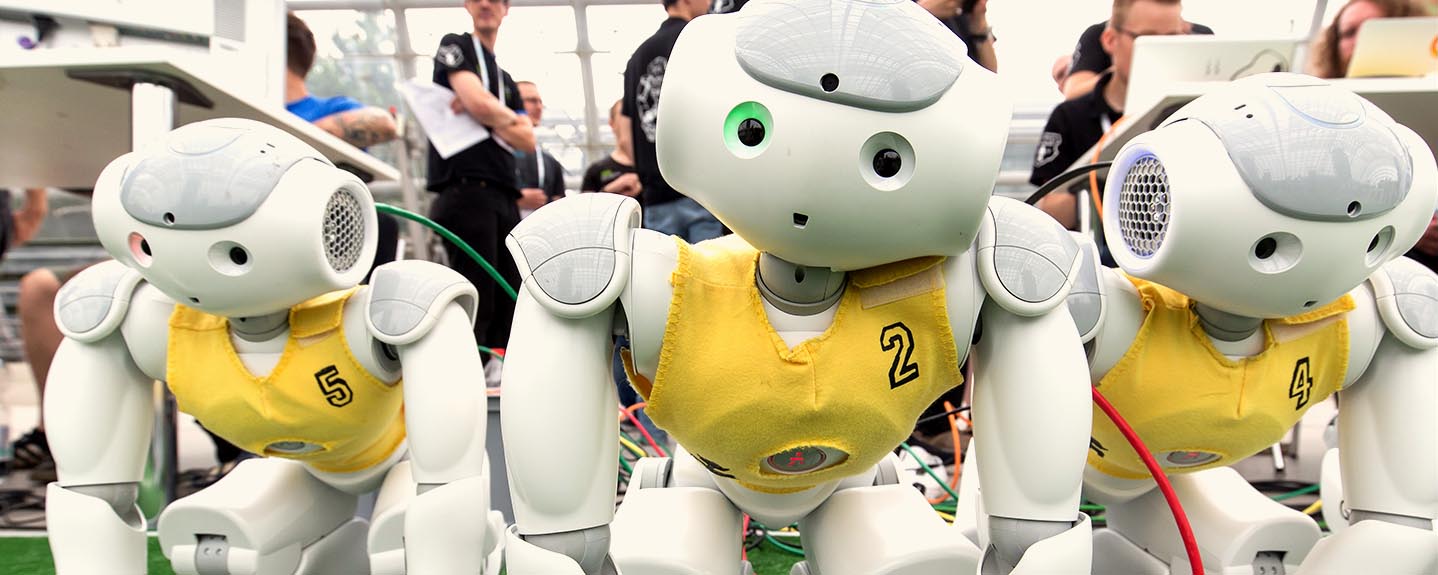

Montréal, a city named Intelligent Community of the Year, is looking to thrive on the latest advancements in artificial intelligence. The RoboCup Federation uses robotics to inspire participants, from students as young as 11 to postdoctoral researchers, to excel in science, technology, engineering, arts, and math.


What is RoboCup?

RoboCup takes pride in being an incubator and leader in autonomous artificial intelligence research, bringing together passionate and talented people to drive robotics and machine learning worldwide.

Location

The convention center sits in Montréal's downtown core, with everything within walking distance. Nearby, you will find the city's business center, international district, entertainment district, shopping district, historic Old Montréal, Chinatown, and the science center, along with many activities and festivals.

Go exploring

A striking union of European charm and North American attitude, Montréal attracts visitors with a harmonious pairing of the historic and the new, from exquisite architecture to fine dining. The city is also home to top universities and leading industries.